What are /r/cloudcomputing's favorite Products & Services?

From 3.5 billion Reddit comments

The most popular Products mentioned in /r/cloudcomputing:

The most popular Services mentioned in /r/cloudcomputing:

Amazon Web Services

Amazon Elastic Compute Cloud

Shadow

DigitalOcean

Amazon Simple Storage Service

Apache Guacamole

Nextcloud

Notion

git-annex

Parsec

Boxcryptor

VNC Connect

PingPlotter

Paperspace

Splunk

The most popular reviews in /r/cloudcomputing:

So I would recommend aws, but p much any cloud provider will do.

Note: If you have your code in git this is a fairly easy process. If not you’re gonna have to fiddle with ssh/scp/sftp to get your code on to the system.

Steps:

- Create aws account if you don’t have one

- add a credit card to the account, as you're gonna need an EC2 instance (virtual machine) with a GPU, it sounds like. Those cost money. Make sure you check out the pricing sheet

- navigate to the EC2 page

- click “launch instance”

- go thru the setup wizard

- Make sure you choose the correct instance type

- ami: can just be Amazon Linux or Ubuntu or Debian etc

- keep hitting next for most of the options

- make sure you allow port 22 from your IP address or a CIDR block containing your IP. Otherwise you won’t be able to communicate with this box

- make sure you download the key pair otherwise you won’t be able to access your box

- Launch

- Wait

- ssh into the instance

- Install the programming language and any tools or dependencies you need

- Get your code onto the box. Either do so with git or sftp etc

- Install program dependencies. Compile it if necessary

- Run it

- Don’t forget to terminate your instance when done or you may get a nasty bill.
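The port 22 step above is the one people most often get wrong, so here is a minimal sketch of it in Python. It only builds the rule as a plain dict in the shape that boto3's `authorize_security_group_ingress` accepts under `IpPermissions`; it does not call AWS. The function name `ssh_ingress_rule` and the example addresses are made up for illustration, and it assumes IPv4 only.

```python
import ipaddress
from typing import Optional


def ssh_ingress_rule(my_ip: str, cidr: Optional[str] = None) -> dict:
    """Build an ingress rule allowing TCP port 22 from my_ip, or from a CIDR containing it.

    Hypothetical helper: it returns a dict and makes no AWS calls.
    """
    address = ipaddress.ip_address(my_ip)
    # With no CIDR given, a single address becomes a /32 network.
    network = ipaddress.ip_network(cidr if cidr else my_ip)
    if address.version != 4 or network.version != 4:
        raise ValueError("this sketch only handles IPv4")
    if address not in network:
        # Opening port 22 to a range that excludes you locks you out of the box.
        raise ValueError(f"{my_ip} is not inside {network}")
    return {
        "IpProtocol": "tcp",
        "FromPort": 22,
        "ToPort": 22,
        "IpRanges": [{"CidrIp": str(network)}],
    }
```

Assuming boto3 is installed and credentials are configured, the result could be passed as `IpPermissions=[rule]` along with your security group ID.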

If you're used to a full server environment, AWS's EC2 has some lightweight options that are fine. There will be a lot of documentation that you can ignore; most of the cloud is built around not just breaking away from the datacenter, but also breaking away from the full server. The T2 series is fantastic for exactly your use case.

But if you're running a simple, singular process, consider learning about serverless cloud computing like Lambda. Rather than having a server, you just upload your program and AWS determines how it's run. No more server. Check out the FAQ Java section to get started. You'll probably have some documentation to get through, but this will be the cheapest option assuming your algorithm is light.

Is the company moving away from the islands? Or is it a matter of a single employee giving up?

Without knowing the details of what is not working, it can be a bit hard to help. But if it’s a regional issue (with the Canary Islands being blocked), you could try to set up a virtual machine in AWS / Azure / GCP and use that as your workstation. Look into tools like Paperspace or Parsec for connecting to your computer.

Here is a recent example of a migration off: https://about.gitlab.com/2016/11/10/why-choose-bare-metal/

There are others.

That said, private-cloud-only is a dead strategy. Hybrid cloud is the smart bet right now for the enterprise, and multi-public is emerging and is the future, IMO.

There's a few ways to go.

Use a cloud provider to host only your DR environment(s). You'd need solid high speed connections and could use an instance per server/app/however you organize it. Use your favorite backup/sync tool to copy the data over as frequently as you want it.

For AWS there is Storage Gateway and AWS has virtual tape libraries for backups. Here's their DR page.

You can use AWS instances as available DR environments with a cloned configuration. Either restore from backups or set up real-time sync.

Some vendors offer cloud DR appliances now. They can be used onsite or in the cloud (a physical appliance or a virtual one.) This operates like an offsite DR location. The appliance handles the backups/data sync operations and simplifies the switchover in case of a crash. An example would be NetApp's AltaVault.

You could use a vendor's private storage offering. This is typically for large organizations.

For small needs there's Dropbox or iCloud or Google Drive. Sync those and you have a backup of your data.

I checked it out and it seems like a neat solution, thanks.

It looks like arbitrary code can be run as long as it is statically linked so no worries there, just need to be wrapped with a few lines of Node.js like you said: https://aws.amazon.com/blogs/compute/running-executables-in-aws-lambda/
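The linked post wraps the binary in Node.js. The same idea can be sketched in Python, which is a swap on my part and not what the post shows. The binary path `./my_binary` and the `args` key on the event are made-up placeholders.

```python
import subprocess

# Hypothetical path: a statically linked binary bundled in the deployment package.
BINARY_PATH = "./my_binary"


def run_executable(argv, timeout_seconds=30):
    """Run a command and return its stdout; raises if it exits non-zero."""
    completed = subprocess.run(
        argv,
        capture_output=True,
        text=True,
        check=True,
        timeout=timeout_seconds,
    )
    return completed.stdout


def lambda_handler(event, context):
    # "args" is an assumed field on the event, not something Lambda defines.
    args = [str(a) for a in event.get("args", [])]
    return {"stdout": run_executable([BINARY_PATH, *args])}
```

Keeping `run_executable` separate from the handler means the wrapper can be tried locally with any command before packaging the real binary.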

2 vCPU, 8 GB for under $50 with Windows 365, but yes, still geared for a business user...

Thanks for the correction. I'd forgotten about their reduced-redundancy options too: http://aws.amazon.com/s3/pricing/

Their sales reps tell me that there is still 'plenty of margin' -- even on storage.

I'm not aware of any predictive study but historically speaking, in the last 10 years, AWS has only ever dropped their prices to reflect reductions in their operating costs: https://aws.amazon.com/blogs/aws/category/price-reduction/

If it requires any GPU power then shells will not be enough. You want to look at https://shadow.tech/en-gb/. Although it is far more expensive, you are getting a decent computer and a dedicated GPU. Just out of interest, would you mind sending the game?

Have you considered either GPU servers in the cloud:

https://aws.amazon.com/blogs/aws/in-the-work-amazon-ec2-elastic-gpus/

Or GPU Workspaces if you're after a desktop experience?

https://aws.amazon.com/blogs/aws/new-gpu-powered-amazon-graphics-workspaces/

Thx. I actually thought there might be some cheap options out there. Shadow (https://shadow.tech/) starts at 13 Euro per month. Unfortunately they cannot offer the service to new sign-ups until summer (obv. due to the high demand and missing hardware). So I was looking for alternatives in a somewhat comparable price range. AWS (and I guess GCP) really look expensive. If I need to pay more than 50 bucks per month I might as well buy new hardware myself.

We literally started last week, so prepare to be a little underwhelmed until early March, but here's the super rough start. I'm mostly interested in ML/AI pipeline set ups given my line of work, so I really could use help thinking of topic ideas outside of my myopia. I've got a full-time paid researcher on it now so you'll definitely get a response:

Our current aims: https://www.notion.so/worldfirsttech/Development-Epics-1cbeeaaa692b4af1822d572e069d467e

Here's where we are keeping research links of interest (a terribly unorganized list): https://www.notion.so/worldfirsttech/a058b27ff0b0458bb6990bbea8b9a2b6?v=7af0140b0fc645e3864581c7f1fdae8a

Golem would be the cheapest if you can get your software integrated with it. The guidelines are here: https://golem.network/use-cases/. Basically, it's a network made up of spare compute capacity from data centers and individuals. People who use the Golem network can go through www.golemgrid.com and simply pay with a credit card.

AWS x1.32xlarge has 128 cores and as a spot instance it's currently only $1.92 per hour in the Sydney region. If you're currently paying $2.085 per hour for an on-demand c4.8xlarge, it might be worth considering an upgrade. Roughly the same price but 4x the number of cores, which might bring you down to 10 seconds for your workload.
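To make the comparison concrete, here is a rough sketch of the arithmetic. The prices are the ones quoted above. The c4.8xlarge core count is inferred from the "4x" claim rather than taken from a spec sheet, the 40-second baseline is purely hypothetical, and the runtime estimate assumes the workload scales perfectly with core count, which real workloads rarely do.

```python
def price_per_core_hour(hourly_price: float, cores: int) -> float:
    """Hourly price divided evenly across cores."""
    return hourly_price / cores


def ideal_runtime_seconds(baseline_seconds: float, old_cores: int, new_cores: int) -> float:
    """Best-case runtime after changing core count, assuming perfect parallel scaling."""
    return baseline_seconds * old_cores / new_cores


X1_CORES = 128             # from the comment
C4_CORES = X1_CORES // 4   # assumption: derived from the comment's "4x" ratio

x1_spot = price_per_core_hour(1.92, X1_CORES)
c4_on_demand = price_per_core_hour(2.085, C4_CORES)

# Hypothetical baseline of 40 seconds on the smaller instance.
estimate = ideal_runtime_seconds(40.0, C4_CORES, X1_CORES)

print(f"x1 spot:      ${x1_spot:.4f} per core-hour")
print(f"c4 on-demand: ${c4_on_demand:.4f} per core-hour")
print(f"ideal runtime on x1: {estimate:.1f} s")
```

Spot prices move and spot instances can be reclaimed, so treat the per-core figure as a snapshot and check current pricing and instance specs first.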

A question: is there any chance you could rewrite your use case to take advantage of GPUs? The GPUs on AWS p2 and g2 instances can do a lot of data crunching, if you can make your use case fit a GPU.

What is your location and where are your cloud servers? What are your ping times? I'm in London and I often use US-based instances and they are not really distinguishable from a server on my own LAN, while using SSH. When I started over 10 years ago I played around with instances as far away as Tokyo and the connections would have only got faster since then.

If you have SSH connections dropping, I highly recommend using mosh. I use it on laptops and my iPad, where I can even close the lid and reopen it hours later and still maintain the connection. You will notice the way certain things redraw the screen is a little different when using mosh, but if you use tmux within it, it's the same as straight SSH. You only need to use it on your client and your bastion host and then use SSH from there.

Ah, no you won’t be able to trace UDP so easily. If on Windows you might want to use PingPlotter: https://www.pingplotter.com

If your Ubuntu game server is running ssh/22 or another TCP port you can test that port and the latency should be about the same for TCP
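A minimal sketch of that TCP test in Python, using only the standard library. It times how long the TCP handshake takes, which is roughly one round trip. The function names are made up for illustration, and the host and port are whatever your server actually exposes.

```python
import socket
import statistics
import time


def tcp_connect_latency_ms(host: str, port: int, timeout: float = 3.0) -> float:
    """Time a single TCP connect (handshake) to host:port, in milliseconds."""
    start = time.perf_counter()
    with socket.create_connection((host, port), timeout=timeout):
        elapsed = time.perf_counter() - start
    return elapsed * 1000.0


def median_latency_ms(host: str, port: int, samples: int = 5) -> float:
    """Median of several connect timings, to smooth out one-off spikes."""
    return statistics.median(
        tcp_connect_latency_ms(host, port) for _ in range(samples)
    )
```

For example, `median_latency_ms("your.server.example", 22)` against the SSH port. The number includes local connection setup overhead, so treat it as an approximation of the round trip and not an exact ping.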

If you go with Syncthing, you can use Boxcryptor Classic which uses EncFS at the core, or EncFSMP.

Create an encrypted folder using EncFS and set up Syncthing to watch that folder. Only mount the folder on trusted devices. Syncthing can only see, and thus will sync, just the encrypted files to whatever devices you add to the network... for example your VPS. Should your VPS be compromised, the data is unusable without your passphrase.

You can build a system that gives you more security than Bittorrent Sync while also giving you more control over the individual parts. Let EncFS do the encryption and let Syncthing do the syncing. Let each app do one thing well. (the unix way!)

Hope this helps!

You could use AWS Lambda Scheduled Functions (since I assume from your Jenkins comment that you don't want to host infrastructure elsewhere) - https://aws.amazon.com/blogs/aws/aws-lambda-update-python-vpc-increased-function-duration-scheduling-and-more/
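A rough sketch of what the handler for a scheduled function might look like in Python. Scheduled invocations arrive as an event whose `source` is `aws.events` and whose `detail-type` is `Scheduled Event`. The `run_job` function is a made-up stand-in for whatever you currently run from Jenkins.

```python
def run_job(triggered_at: str) -> str:
    """Hypothetical placeholder for the real periodic task."""
    return f"job ran for schedule tick {triggered_at}"


def lambda_handler(event, context):
    # Ignore anything that is not a scheduled invocation.
    if event.get("source") != "aws.events":
        return {"ran": False, "reason": "not a scheduled event"}
    # "time" is the ISO 8601 timestamp of the schedule tick.
    result = run_job(event.get("time", "unknown"))
    return {"ran": True, "result": result}
```

The schedule itself (a rate or cron expression) is configured on the trigger, not in the code, so the handler stays the same if you change how often it runs.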

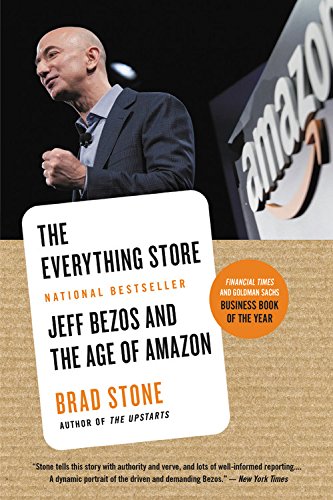

I would highly recommend the book on Jeff & Amazon called "The Everything Store" for a history of Amazon up to the last couple of years. It is light on AWS, but you can get a look at the framework that was built and how the beginnings were forged. I really enjoyed it on Audible.

Disclaimer: I am a current Amazon employee and none of my opinions represent Amazon in the slightest.