What are /r/vjing's favorite Products & Services?

From 3.5 billion Reddit comments

The most popular Products mentioned in /r/vjing:

The most popular Services mentioned in /r/vjing:

Resolume Avenue

Gumroad

MPEG Streamclip

Vimeo

Plane9

Vuo

IGTV

VirtualDJ

Cross DJ

Processing

Soundflower

tonymacx86.com

Clonezilla

Ableton Live

MyPublicWiFi

The most popular Android Apps mentioned in /r/vjing:

The most popular reviews in /r/vjing:

For under $300: save up. These days you want something 3K lumens or brighter; I recommend 5K or more for anything with house lighting or a small venue. I use a 6K as my regular. Laser or DLP projectors are going to give you the best contrast and darkest blacks, while LCD will not. If you're doing any sort of trompe-l'œil geometric illusions, you want the darkest blacks.

This is what I use and I wouldn't recommend anything less if you're trying to get paid gigs.

If you are looking to only do stuff indoors with no ambient lighting to just study projection mapping then something 3K lumens will be fine but I would say anything less than $300 is going to cost you as an investment in the long run.

EDIT: Also check out 6-month no-interest financing from the Amazon credit card or PayPal. I've used this successfully to make a big purchase and split it up so I wasn't at risk. If you miss a payment, though, you end up paying full interest. I just make sure I pay it off and watch my balance.

Xsens allows you to do just that: run around to your heart's desire. But whenever there's an impact, some drift is added, and you need to recalibrate often.

BUT, compared to the artifacts from optical solutions, I definitely prefer drift.

With optical solutions, if a marker is lost, the avatar's arm might suddenly shoot right through the head.

This is a funny video on what bad mocap looks like: https://vimeo.com/28501846

I'd rather have drift in mocap than sudden random motion any day...

I've seen that Skrillex used Xsens live to drive a huge Unity3D avatar. I'm sure more people have, but that's the one I know of.

Also, any inertial solution behaves the same; the only reason I keep saying Xsens is that it's the only one I have experience with.

If you are only planning on triggering events from detected gestures, ok, you might get away with optical marker based tracking. But don't drive an avatar with it...

I've published this under a creative commons licence: https://creativecommons.org/licenses/by-nc-sa/3.0/

You can download from vimeo if you like.

I just ask that you credit me (idk how you do this in a live set, but please figure out a way!)

Basic rule of thumb is if you are making real money on this you can't use it (or contact me to licence). If you are using it as part of an art project you aren't getting paid for, or are getting paid pennies for, go ahead. Just please credit me somewhere.

A mosaic (or video wall) controller is what you're looking for. They are still pretty expensive. They fall into the broader category of "scan converters".

A single-channel scan converter will take an input signal, usually do some processing to it (colour correction/freeze frame/zoom) and output the signal in the same or possibly a different format/connector. Logically a video wall controller would be 9 of these in the same box, set to zoom into a different area of the input signal.
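To make the "zoom into a different area of the input signal" idea concrete, here is a minimal Python sketch that computes the crop rectangle each output would show. The 3x3 layout and the 1920x1080 input are assumptions for illustration, and `wall_regions` is a hypothetical helper name. Real controllers also deal with bezel compensation and scaling, which this ignores.

```python
def wall_regions(src_w, src_h, cols=3, rows=3):
    """Return one (x, y, width, height) crop rectangle per screen, row by row."""
    tile_w = src_w // cols
    tile_h = src_h // rows
    return [
        (col * tile_w, row * tile_h, tile_w, tile_h)
        for row in range(rows)
        for col in range(cols)
    ]


if __name__ == "__main__":
    # Each screen in the wall shows one of these regions, scaled to full screen.
    for index, region in enumerate(wall_regions(1920, 1080)):
        print(index, region)
```

Each of the nine "converters" in the box would then scale its own rectangle up to the full resolution of the screen it feeds.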

If you have a desktop and enough slots, the Eyefinity 6 card has 6 Mini DisplayPort outputs which you can convert to VGA; maybe with your existing card you can stretch to 9 outputs.

Maybe look into using a Raspberry Pi? Video walls are possible with VLC (pdf) or RTSP.

Resolume is free to download (it throws a watermark on the screen every minute or so until you buy a license, but it's a minor nuisance if you're just messing around), and also has really solid free training on the website ( https://resolume.com/training ). I highly recommend starting there. I have very little background with any kind of visual work, but a month ago I downloaded Resolume, started grinding through the training, taking notes, and practicing everything I learned, and I've already gotten to the point where I'm making stuff that I'm really proud of. From what I've seen from a month of obsessively reading through posts on here, pretty much everyone uses Resolume.

https://resolume.com/download/

Scroll down to the bottom and find the version of 5 you want from the dropdown box. Email Resolume about the key; if you're already a customer they should take care of you.

Looks like Resolume is coming up with a solution, but it caught 'em off guard and it's going to take a bit.

http://resolume.com/forum/viewtopic.php?f=12&t=15950

https://resolume.com/support/en/no-dxv-export-in-adobe-cc-2018

Here you go:

https://resolume.com/manual/en/r4/controlling#audio_analysis

So you first need to plug in your audio-in line (or onboard mic would do, too) and then choose 'External' - just check your Preferences so that the correct audio device is recognized. I find that you sometimes have to relaunch Resolume after changing the audio driver option, but other than that it's really quite simple.

Personally I like Machete. It's fast and lightweight, and does lossless cuts if you do your splices/cuts at keyframes (i.e. doesn't have to re-encode the video, so no quality loss).

It might be possible to capture the stream into XSplit, the output of which appears as a source in Resolume. You want the Broadcaster version of XSplit, and you don't need to be streaming the output for it to appear in Resolume.

I believe a program for Windows called MIDI-OX can do this, although I use Mac so I haven't used it myself. http://www.midiox.com

For people who need a solution for this on Mac, there is a program called MidiPipe.

A bit late I guess, but before I switched to Magic Music Visuals I did exactly this (projecting pre-rendered videos and switching between them via MIDI) using freeware:

https://sourceforge.net/projects/glmixer/ and https://www.bome.com/products/miditranslator/overview/classic

Using GLMixer, I loaded all the videos in a folder and set up one session per video. With the key combinations "ctrl+pgup" and "ctrl+pgdn" you can switch between sessions, and you can choose a transition method (I just used "fade to black") for the switching. I then set up Bome's to translate MIDI events from two pads on my MIDI controller to the key combos above. This has been rock solid and stable for me over multiple gigs (~10). The limitation is of course just that it only works for "static" video files and any FX/manipulation etc that the software is capable of is very hard to control without touching the PC.
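The translation step described above (MIDI pad in, key combo out) boils down to a lookup table. This is only a sketch of the idea in Python, not how Bome's MIDI Translator is actually configured. The note numbers 36 and 37 are hypothetical pad assignments, and `keys_for_midi` is a made-up helper name.

```python
# Hypothetical pad assignments: the note numbers depend on your controller.
PAD_TO_KEYS = {
    36: "ctrl+pgup",  # switch session in one direction
    37: "ctrl+pgdn",  # switch session in the other direction
}


def keys_for_midi(message):
    """Return the key combo for a raw 3-byte MIDI message, or None."""
    if len(message) != 3:
        return None
    status, note, velocity = message
    # Note-on is status 0x90-0x9F; a note-on with velocity 0 counts as note-off.
    is_note_on = (status & 0xF0) == 0x90 and velocity > 0
    if not is_note_on:
        return None
    return PAD_TO_KEYS.get(note)
```

Ignoring note-off messages matters here, otherwise releasing the pad would fire the key combo a second time and skip a session.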

MacBook Pro 15-inch with a GPU? Is your content converted to DXV?

All of your content needs to be in DXV so it can run through your computer's discrete GPU. If you don't use DXV, Resolume will be unstable, and if you don't have a GPU you can only run a layer or two at most anyway.

HAP codec is probably the most used format among VJs for not bogging down resources as much as say PhotoJPEG. However, Resolume software, one of the more popular programs, does not support HAP. Instead they developed their own codec called DXV. The current version is DXV3.

You can download HAP codec here: http://vdmx.vidvox.net/blog/hap

You can download DXV3 here: https://resolume.com/software/codec

It would be nice if we could just get a more uniform dimension size, codec, frame rate, naming scheme for VJ clips.

Download the DXV codec if you haven't already. (It's included with Resolume, so if you have Resolume on that machine, it has the codec.) Get MPEG Streamclip (http://www.squared5.com). It's your friend.

It will take pretty much any format and transcode it to DXV. Import the DXV into Resolume.

I suggest you not go for DaVinci Resolve and instead try to use and support tools made by and for the community, like Olive (I recommend and use version 0.1) and Kdenlive.

First of all, because DaVinci Resolve is not no-strings-attached free: the software is advertised on its website as free, but in reality it's locked at 720p. To unlock 1080p or above you have to purchase a license from a reseller starting at $295. So not free, and not really usable at 720p, especially for streaming video or projecting. Oh, and IMO Resolve is just an ad for Blackmagic cameras, so there's that.

And the programs I mentioned are free in the literal sense of the word. All the features at no cost. OBS is actually made with the same model as Olive and Kdenlive, with volunteer contributors making awesome software for the community to do great things with.

I assume you do not have access to an external sound card.

The common approach is to use Soundflower to route system sound into a virtual "microphone" that can be used by Audio Analysis.

Soundflower is a small free app that creates virtual inputs and outputs in System Sound. They also show up in Audio Analysis as inputs. The trick is to route the system sound into Soundflower, making you able to playback the music on your iMac's speakers as well as getting a clean input into Audio Analysis.

Install Soundflower, and install SoundflowerBed as well. SFB is not necessary, it's just a nifty GUI for the menu bar that I prefer to use (you'll read why below). You may need to restart the system post-install.

In System --> Sound, set your Output to the new "Soundflower (2ch)". Repeat for Input, also setting it to use "Soundflower (2ch)".

What is happening is that all system sound (music, app sounds etc) will play through the virtual Soundflower (2ch) output. And instead of using the default microphone input, we get clean sound from the source, as the output is routed directly into the input.

The downside is, no sound will play through the internal speakers with this setup. The sound is playing through non-existent speakers (and being recorded through a non-existent microphone). My workaround is to use SFB to simultaneously route the Soundflower output through the speakers. Run SFB, click the icon on the menu bar and choose an output from the drop-down menu. It's probably something like "Internal Speakers".

I don't think it would be possible, and it must be why he's using a dedicated set of separate decks for just that. Just seems like an odd omission on the hardware manufacturer's part, especially since so many set-ups nowadays are gonna be purely electronic and require some method of syncing between units.

edit: I'm assuming you meant get the timecode out while simultaneously using the decks to play music. If you just meant use decks to get a timecode signal out to control something like Traktor Scratch, all you'd need is a timecode cd like the ones available to download and burn for free here.

You can do it without any external hardware.

I've made my laptop a Wi-Fi hotspot using MyPublicWiFi; you then connect your controller to that.

That means you don't need any internet for it to work.

What are you using: phone, tablet, iPad..?

You say you have been using TouchOSC; how did you have it working before?

Reposting from the comments ;)

I’m one of the developers of Shoebot, an app similar in purpose to Nodebox, using Python as well, but multi-platform (Win, Linux, Mac); it is also free software (GPLv2).

If you are interested, give it a go; the url is http://shoebot.net

Ooh you're gonna love this. Processing is based on Java and it's basically made for visual artists and the like.

It is very easy to write stuff like this, in fact your clock with milliseconds can be written in one line. This one will give the time until the next occurrence of midnight:

text(nf(23-hour(),2)+":"+nf(59-minute(),2)+":"+nf(59-second(),2)+"."+nf(int(999-System.currentTimeMillis()%1000),3).substring(0,2), 10, 100);

There are a few other steps you would need to take though, after installing the Syphon library:

- Create a nice big font

- Make a PGraphics object for the syphon server and set its size to whatever you want (your projector resolution probably)

- init the syphon server

- render your text using the line above to the Syphon server's PGraphics object
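The countdown arithmetic is easy to get subtly wrong, because each field has to borrow from the one above it. A quick way to sanity-check it outside Processing is a short Python sketch like the one below. It is not the commenter's code. It subtracts the current time from the next midnight as a whole and only then splits the result into fields. `time_until_midnight` is a hypothetical helper name.

```python
from datetime import datetime, timedelta


def time_until_midnight(now):
    """Format the time left until the next midnight as HH:MM:SS.cc."""
    next_midnight = (now + timedelta(days=1)).replace(
        hour=0, minute=0, second=0, microsecond=0
    )
    remaining = next_midnight - now
    # Work in whole hundredths of a second, then split into fields.
    centis = remaining // timedelta(milliseconds=10)
    seconds, cc = divmod(centis, 100)
    minutes, ss = divmod(seconds, 60)
    hh, mm = divmod(minutes, 60)
    return f"{hh:02d}:{mm:02d}:{ss:02d}.{cc:02d}"


if __name__ == "__main__":
    print(time_until_midnight(datetime.now()))
```

At 22:30:15.25 this gives 01:29:44.75, which is a handy reference value when checking the Processing version.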

Download the processing IDE and have a look through the examples, then install the Syphon library (Sketch -> Library -> Add Library...) and check out the examples from that. PM me or continue the thread if you want any help.

EDIT: changed code to actually do what you wanted, duh

But I agree with onlyanothertom that a projector is the easiest and cheapest way to go about this.

Adding to this, I've tested numerous USB HDMI capture cards like this one. A bit pricier, but the one I would recommend is the Elgato Cam Link.

Something like this? https://www.amazon.nl/Salley-Video-opname-videogames-videoconferenties-meerkleurig/dp/B095HVGWJV/

No idea if this particular capture device is any good, but there are a bunch of USB and hardware HDMI cards. The signal usually appears as kind of a webcam source in Resolume.

I'll just throw in my stream of thought: your biggest nemesis is latency. To minimize latency, you need "discrete" solutions. For example, you set up the DDJ-400 on the Mac; the output from the DDJ could go to an audio interface such as a Scarlett Solo, which will feed the audio to your desktop PC and connect the speakers for playback. Then the desktop would be connected to the displays. It could work. You might be able to use a software solution, but the latency usually isn't ideal. Good luck!

Thanks! All the loops were made in After Effects and I used Resolume to mix them.

Of course, you can get the loops for yourself to play with here.

While these are for sale I follow a pay what you can policy. So if you’re interested and can’t make the price work, let me know, we’ll see what we can work out.

Synesthesia is a great program indeed, lots of possibilities and jamming. I use it in combination with Resolume Arena.

If I were to make something the crowd could interact with, I would use the REST API to set up a website the crowd could go to via smartphone and mess around with parameters or change clips.

It's actually not so difficult: https://resolume.com/support/restapi
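As a rough idea of what the server side of such a crowd page might do, here is a minimal Python sketch that triggers a clip over HTTP. Treat the specifics as assumptions to check against the documentation linked above: the port (8080), the `/api/v1` prefix and the `.../clips/N/connect` path are how I remember the API, not something stated in this thread. `clip_connect_url` and `trigger_clip` are hypothetical helper names.

```python
import urllib.request


def clip_connect_url(layer, clip, host="127.0.0.1", port=8080):
    """Build the URL for triggering one clip (path and port are assumptions)."""
    return (
        f"http://{host}:{port}/api/v1/composition"
        f"/layers/{layer}/clips/{clip}/connect"
    )


def trigger_clip(layer, clip, host="127.0.0.1", port=8080):
    """POST to the clip's connect endpoint and return the HTTP status code."""
    request = urllib.request.Request(
        clip_connect_url(layer, clip, host, port), method="POST"
    )
    with urllib.request.urlopen(request, timeout=2) as response:
        return response.status
```

For a crowd-facing page you would put your own small web app in between and only let it call a whitelist of layers and clips, rather than exposing the Resolume machine directly.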

I don't know if this is what laserpilot was getting at, but I've been experimenting with capturing existing designs, such as paintings, and projecting them back onto themselves. They become augmentable objects in a way. For example, I'd like to return to this chunk of the Berlin wall and augment the graffiti again, but with distortion controlled by pedestrians walking past on the adjacent sidewalk. You could do this to any wall on your campus and engage passing students with the gamut of interactivity techniques.

If you're after less abstract scenes like iTunes has, then you can see if my Plane9 fits you. Just select sample from microphone in the configuration window. If it doesn't fit the bill, then I suggest you go with dcurry431's suggestion.

Vertex shaders aren't actually that fun (they are programs that are run for each vertex in a polygon). The real fun starts with pixel shaders. They are programs that are run for every pixel in a polygon. A few examples can be found at my Plane9 scenes page. But it's when you see things like a realtime glowing spike ball that is created in a single shader that you understand just how powerful shaders are. Oh and yes, all scenes move to the music also.

If you don't need the outputs it's always better to have only one card in your system. Resolume only renders on the card that is displaying the interface window (so never plug your monitor into your on board graphics output), and pushing pixel data between cards takes time.

Unless you have a very good reason to use them (i.e. frame lock / gen lock), I would ditch the workstation components. A current gen i9 with a current or last gen graphics card (2080, 3080) will outperform your current system by at least 2-3 times. Get 32 or even 64 gigs of memory and you have yourself a beast. Of course the same is true for the AMD/Radeon equivalent.

You didn't ask about many outputs but this is a very good read nonetheless:

https://resolume.com/support/en/lots-of-outputs

There's so much you can do with Resolume.

This thread on the Res forum has dozens of examples of skilled users creating visuals using no source clips, only the built-in effects engine.

You can download each user's composition and deconstruct how they did it.

Resolume has a free program that lets you convert image and video formats into DXV3 and you can further customize the settings such as alpha channels, sizing, audio (if it has any), etc. Here's the link and you can find it towards the bottom of the page "Resolume Alley" https://resolume.com/download/

I'm going to guess that any freeze or skips are a cpu or gpu performance issue on your Resolume computer. I assume that you're generating SMPTE from a stable external device and that there's not a skip or freeze problem with the SMPTE input.

You should probably make sure that you're running at optimum performance before synching to SMPTE, and make sure that you don't have a fundamental problem. This might help: https://resolume.com/support/en/preparing-media

Once you get the system running optimally, then see if you're having a sync issue. Make sure you've read this page and that you're sure you've chosen the right SMPTE framerate: https://resolume.com/support/en/smpte
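To see why the SMPTE framerate setting matters, it helps to convert a timecode to an absolute frame number. The sketch below is a simplified Python illustration that only handles non-drop-frame timecode at integer rates (24, 25, 30). It says nothing about how Resolume does this internally, and `timecode_to_frames` is a hypothetical helper name.

```python
def timecode_to_frames(timecode, fps):
    """Convert non-drop-frame 'HH:MM:SS:FF' timecode to a frame count."""
    hours, minutes, seconds, frames = (int(part) for part in timecode.split(":"))
    if frames >= fps:
        raise ValueError(f"frame field {frames} is not valid at {fps} fps")
    return ((hours * 60 + minutes) * 60 + seconds) * fps + frames


if __name__ == "__main__":
    # The same timecode lands on a different frame depending on the rate.
    for fps in (24, 25, 30):
        print(fps, timecode_to_frames("00:01:00:00", fps))
```

If the sender runs at 25 fps and the receiver is set to 30, the frame field never reaches 25 to 29, so the receiving side sees a short gap every second, which can look like a skip.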

You can use Resolume for that. Out of the box it works with MIDI input data; you just need to assign MIDI to the parameters you want. Routing the MIDI data from Logic to Resolume shouldn’t be too difficult; at least it isn’t from Ableton. https://resolume.com/support/en/midi-shortcuts

Thanks for that. There's a lot of that in the industry. Can't say it doesn't hurt, but I have lots of xanax. I understand that lots of people don't like breakcore, and don't fault them for it. This guy was out of line though, and I've literally never seen him contribute to this sub before.

As for OSC, it's a relatively simple network protocol that's addressed similarly to HTTP or SOAP/REST APIs. You can see all the Resolume addresses by going to mapping and selecting OSC. It's similar to mapping MIDI controls, except instead of training Resolume to read the control, you're training the control to talk to the address.

Here's Resolume's guide: https://resolume.com/support/en/osc
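To show how little is involved in "talking to an address", here is a minimal Python sketch that builds an OSC message by hand and sends it over UDP, using only the standard library. The byte layout follows the OSC 1.0 encoding (null-padded strings, big-endian arguments). The port 7000 and the example address are assumptions, so check them against the OSC mapping view and the guide above. `osc_message` and `send_osc` are hypothetical helper names.

```python
import socket
import struct


def _osc_string(text):
    """Encode a string OSC-style: ASCII, null-terminated, padded to 4 bytes."""
    data = text.encode("ascii")
    return data + b"\x00" * (4 - len(data) % 4)


def osc_message(address, value):
    """Build an OSC message carrying a single int or float argument."""
    if isinstance(value, int):
        tag, payload = "i", struct.pack(">i", value)
    else:
        tag, payload = "f", struct.pack(">f", float(value))
    return _osc_string(address) + _osc_string("," + tag) + payload


def send_osc(address, value, host="127.0.0.1", port=7000):
    """Send one OSC message over UDP (host and port are assumptions)."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.sendto(osc_message(address, value), (host, port))


if __name__ == "__main__":
    # Example address only: copy the real one from Resolume's OSC mapping view.
    send_osc("/composition/layers/1/clips/1/connect", 1)
```

In practice a library or an app like TouchOSC does the encoding for you; the point is just that the address string is the whole "mapping".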

This is easy to do and there are many different ways you can do it -- you could assign a MIDI button to the bypass button of that effect, you could map a fader/knob to the opacity of that effect, or you could put the effect directly into the composition and trigger that slot.

The official Resolume training has good training on using a MIDI controller -- scroll down to 6.2 and 6.3: https://resolume.com/training

Actually, maybe pass on the TripleHead. It was the DataPath I was thinking of. Resolume's documentation talks a little bit about it here. It basically acts as a monitor with a large output resolution to your GPU, and you can configure the DataPath itself to split that large output into smaller chunks. I've pushed data through two of them at a club I used to work at, but never configured one myself so I don't know all the details.

What resolution projectors? The main thing you're going to need to keep in mind is the maximum resolution of the graphics card you go with. For example, the max on the RTX2080Ti is 7680x4320, which means theoretically you could run 16 displays from it at 1080p. I've never attempted that, so there are probably some technical issues you would run into with that, be it performance or something else.
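The "16 displays" figure is just the maximum resolution divided into 1080p tiles. A small Python sketch of that arithmetic follows. `max_tiled_displays` is a hypothetical helper name, and the result is only a pixel-budget upper bound that ignores physical outputs, bandwidth and performance.

```python
def max_tiled_displays(max_w, max_h, display_w=1920, display_h=1080):
    """How many whole displays fit in a grid inside the maximum resolution."""
    return (max_w // display_w) * (max_h // display_h)


if __name__ == "__main__":
    # 7680 / 1920 = 4 columns, 4320 / 1080 = 4 rows
    print(max_tiled_displays(7680, 4320))
```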

The other thing you would need to consider of course, is outputs. Most likely your best bet there would be to use something like a Matrox TripleHead. I would avoid multiple graphics cards if possible, IIRC there can sometimes be syncing issues and Resolume (which I assume you're using) has some limitations with multiple GPUs.

Resolume has much more MIDI capability than what’s credited here. It can assign MIDI values to almost anything, including variable slider values with mapped ranges. Ranges can honor note velocities. Every note/cc/channel combination can be specifically mapped and controlled and ranged, and each map can be saved/swapped out based on anything, including MIDI triggers themselves, allowing for endless flexibility in how the MIDI signals are applied to controls.

Many drum machines are capable of sending MIDI on more than a single channel, often with different instruments (parts of the kit) on different channels. At a minimum, some software in front of Resolume could re-channel any output from the drum machines. That’s not going to be the limitation here. It’s certainly much less limited than fft response which can only isolate freq ranges, and can’t separate a kick from a low tom, for instance.
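The two ideas above, re-channeling in front of Resolume and ranges that honor velocity, are both a few lines of arithmetic on raw MIDI bytes. This Python sketch is only an illustration of the concept, not Resolume's implementation. `rechannel` and `velocity_to_range` are hypothetical helper names.

```python
def rechannel(message, new_channel):
    """Move a channel voice message to new_channel (0-15), leaving data as-is."""
    status = message[0]
    if not 0x80 <= status <= 0xEF:
        # System messages (0xF0 and up) carry no channel, so pass them through.
        return bytes(message)
    new_status = (status & 0xF0) | (new_channel & 0x0F)
    return bytes([new_status]) + bytes(message[1:])


def velocity_to_range(velocity, low, high):
    """Scale a 0-127 velocity linearly into the range [low, high]."""
    return low + (high - low) * (velocity / 127)
```

For example, a kick arriving as a note-on on channel 1 (status 0x90) can be moved to channel 10 (status 0x99) so it gets its own mapping, while its velocity drives something like an effect's opacity within a limited range.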

I don’t know what you’re referring to as “gate” in the MIDI spec (maybe you mean CV gate?) but there isn’t a gate in the core MIDI standard.

There’s a good overview of Resolume’s MIDI mapping capability here: https://resolume.com/support/en/midi-shortcuts

I know it gets said on every post, and I know it's expensive - but I'm gonna be that guy to recommend Resolume.

You have the hardware, and a residency. I don't see any reason why you shouldn't be bringing an A-game performance, or at least developing one over time.

A slowly growing (but growing!) community over at /r/VJloops would love to help you find materials to work with. Once in Resolume you can boost those visuals into a new dimension of unique creative output, though I would also flag: garbage in, garbage out. AKA, you've still got to supply creative artistry rather than just mashing the effects. But do mash the effects, we all had to get it out of our system at the start.

Best of luck! There are a heap of materials on Reddit and the internet to help you get started with Resolume. Once you start really getting in the groove, make sure to share one of your shows with us!

You can just run an aux cable from the audio out on one to the audio in on the other.

As for video going back, it's a little more difficult.

If you have a video capture device, you could send video back over an HDMI port. Look for streaming capture devices designed for consoles, like the mirabox. I don't know how much delay you'll get with that, though. When you introduce hardware that does processing on that video, the delay will vary based on the size of the video.

Spout and NDI are network video protocols. Their delay will be higher than HDMI. (HDMI delay is 3-5ms, where network protocols could be as high as 50ms or as low as 10ms.) However, I don't know what kind of delay a mirabox or other video capture device will introduce; it might be 20ms, or it might be 100ms. So I don't know if you will get better performance from one over the other.
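Millisecond figures are easier to judge once they are converted to frames. The Python sketch below does that conversion. The 60 fps output rate is an assumption and `delay_in_frames` is a hypothetical helper name. The delay values are the ones quoted above, not measurements.

```python
def delay_in_frames(delay_ms, fps=60):
    """Express a delay in milliseconds as a number of video frames."""
    return delay_ms * fps / 1000


if __name__ == "__main__":
    for label, delay_ms in [("HDMI", 5), ("network, best case", 10),
                            ("network, worst case", 50), ("capture box guess", 100)]:
        print(f"{label}: {delay_ms} ms is {delay_in_frames(delay_ms):.1f} frames")
```

At 60 fps, anything under about 16.7 ms is less than one frame, while 100 ms is six frames, which is where audio and visuals start to feel visibly out of step.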

Luckily, as far as video hardware goes, a stream capture device is relatively cheap. You can pick one up for less than 150 dollars.

NDI and Spout are built into Resolume out of the box. https://resolume.com/support/en/syphonspout

Apparently, there is a plugin for OBS that adds NDI support: https://obsproject.com/forum/resources/obs-ndi-newtek-ndi%E2%84%A2-integration-into-obs-studio.528/ Had no idea and that's super useful to know.

Based on this new information, I recommend you try using NDI first. Spout is the most compatible and expedient, but NDI usually has better performance. Since you can test this for free, it's a no loss scenario. If the delay is too high, get back to me. I've got a few networking tricks, and we can look at some capture cards to see if we can get better results.

a computer with multiple outputs and cables of equal length, GPUs will output frames at the same time and the sync is fine. Even when running 2 standard GPUs the sync will be pretty much fine; however, for installs and high-end clients you would use Datapath FX4s (4K to 4x 1080p outputs) or Nvidia Quadros with a sync card to make sure your outputs are perfectly in sync when you need tons of outputs.

Yes, best to use Kineme3D or the v002 Model Loader plugins to do that, but out of the box you can also use the Mesh Importer, which supports .dae files.

Check out Vuo too, it is far smarter with 3D objects: https://vuo.org

I'm using two similar setups:

The DJ sends the sound from his mixing desk to an audio capture card connected to his computer:

1) Audio only: We use a self-hosted icecast2 server and a program called butt on his computer to let him stream his audio to my computer.

2) Audio + video: He adds his audio capture card and webcam to OBS, then streams to a private RTMP server (using restream.io pro to get one; otherwise it can be self-hosted too).

On my VJ/stream computer I just open the audio-only stream with VLC player. I also have a physical loopback on my audio capture card, so the output goes back into an input for Resolume to work with.

I then just work in Resolume via FFT and send the result to OBS to finally stream to Twitch.

To get another video source (like a webcam) playing in Resolume with FX on, I need to launch another OBS instance with the NDI plugin, playing the same VLC stream while monitoring it in OBS audio so you get the output. You just have to install the OBS NDI plugin and activate it to get it as a source in Resolume :)

But take care: doing all of this on the same computer can be costly in terms of performance :)

I hope this helps you, feel free to ask anything else :)

You can use Max/MSP to get audio-reactive visualizations that you can import into Resolume. It's a lot of patching and hard work. Or just go with what u/artnik suggested:

>Have you considered splitting the audio off into a separate file and importing that?

That should be easier :P

Sounds like the disk copying hasn't worked.

A boot disk is set up differently than a standard disk.

Copying just the data won't copy the boot sectors.

Might be an issue with 2 boot drives being present if they aren't set up correctly, which is why I normally uninstall or wipe the old drive.

Obviously, uninstall the old drive - to make sure the new drive is booting correctly - before wiping the old one.

Again, this is why I normally take it as an opportunity for a fresh install.

But I've never had Clonezilla fail to clone a disk before.

https://clonezilla.org/show-live-doc-content.php?topic=clonezilla-live/doc/03_Disk_to_disk_clone

Just grab one of these and a projector of choice.

https://www.amazon.com/gp/product/B00MQGCLUI/ref=ppx_yo_dt_b_asin_title_o05_s00?ie=UTF8&psc=1

Load up a USB stick with MP4 videos of whatever visuals you download.

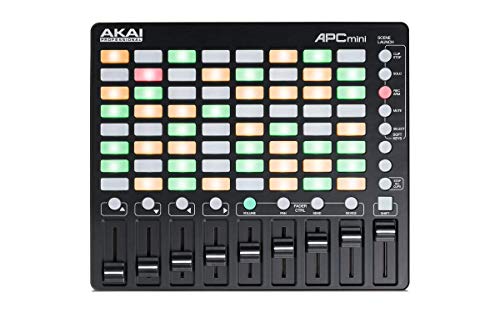

AKAI apc40 mini is a good cheaper version of the regular apc40

Something as simple and inexpensive as a Micca Speck might do the job for you. They are relatively versatile, and Amazon sells them in the $40 range - https://smile.amazon.com/Micca-Full-HD-Portable-Digital-Player/dp/B008NO9RRM/

With inexpensive devices like these, though, I recommend getting backup units in case of issues.

I've been in the same boat and finally settled on this model.

120 inches from 4 feet away and it's cheaper than anything comparable by over $100.
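"120 inches from 4 feet" can be turned into a throw ratio, which is the number to compare between projectors. The Python sketch below assumes the 120 inches is the image diagonal and that the image is 16:9; neither is stated above. `throw_ratio` is a hypothetical helper name.

```python
import math


def throw_ratio(distance_in, diagonal_in, aspect_w=16, aspect_h=9):
    """Throw ratio = throw distance / image width (both in inches)."""
    width = diagonal_in * aspect_w / math.hypot(aspect_w, aspect_h)
    return distance_in / width


if __name__ == "__main__":
    # 4 feet = 48 inches; a 120-inch 16:9 diagonal is about 104.6 inches wide.
    print(round(throw_ratio(48, 120), 2))
```

Under those assumptions the ratio comes out around 0.46, which is firmly in short-throw territory.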

https://www.amazon.com/Focusrite-Scarlett-Audio-Interface-Tools/dp/B01E6T56EA

so like this one? And I would just need an xlr audio output and input through usb into my computer? Sorry if I'm a bit daft :)

It's linked in the post :)

https://www.amazon.com/Optoma-X600-Lumen-Network-Projector/dp/B00GGGQHHC

I used this one for two small tours on the east coast with no problem. Stacking 2 of them has been perfect for dealing with in house lighting issues

I've been wanting one of these ASUS ZenScreen MB16AC 15.6-Inch Full HD IPS Monitor

https://www.amazon.com/dp/B071S84ZW7/ref=cm_sw_r_cp_apa_w5cFAbDNX244P

I have used 7" portable DVD players with A/V inputs before. You can use one just as a monitor or as a source, and you can prolly find them cheap at secondhand stores these days.

Do you want to record your sessions with a video camera (at home)? If so, then buy a 3LCD projector; if not, then the Optoma GT series are among the brighter and cheaper short-throw projectors. https://www.amazon.com/Optoma-GT760A-720p-Gaming-Projector/dp/B012G1AVTA/

Less than $500 and easier to mount, pickup and put down almost anywhere. If you want a standard throw projector there are some others I have my eye on.

best short throw for the price and specs that I found is this one.

It's DLP, but a) I don't think any of the animations I'll be projecting move bright whites quickly and b) if they do... it might look badass actually.

Out of curiosity though, what spec would I need to look at to see how bad the rainbow effect will be?

I asked my friend who does visuals (only a lurker) who answered with this:

>in this set up
>computer (coge) is being fed into this video converter https://www.amazon.com/Tendak-Composite-S-Video-Converter-Upscaler/dp/B01FJKWP7G?th=1
>then that and the critter both are separate inputs into the mixer
>mixer outputs to preview monitor and to projector

https://www.amazon.com/Magewell-HDMI-Video-Capture-Dongle/dp/B00I16VQOY/ref=sr_1_8?ie=UTF8&qid=1515444376&sr=8-8&keywords=video+capture+card+hdmi
>that is the coge-recommended capture card
>class compliant

Hope that helps!

i.e. something like this but with an hdmi to rca converter as the input?

I don't have personal experience with it, but the AVerMedia Game Capture HD2 looks like a good option. It works as a standalone box with HDMI in and through, and records to an internal HDD without needing USB for data or power. One drawback is that it can't be used for capture into a PC, unlike other AVerMedia products.

Like the one you linked it's under $150 but it's not clear if it includes a HDD, so might be worth a look.

Here's a link to a Scan Converter that I've used. VGA to RCA. It's cheap and should give you feed to the TVs (I assume that they are CRTs)

I just picked up this Optoma GT1080 on Amazon for $620. It was available on Prime Now also, which kinda sealed the deal as I needed it quickly.

I painted right onto the console, but it was a light grey to begin with. Just guessing, I think a white base polish would work better because that's what a top layer nail polish is made for.

Glow in the dark polish definitely looks cooler, but a USB gooseneck lamp is also worth considering.

The most important question is how much you are taxing the GPU; you need to get a card that will handle it.

From the point of view of outputs, you would either be looking for a pair of cards or a single card with many outputs like this one.

One thing to be aware of - Sometimes cards have outputs that can't be used simultaneously: for instance my 6870 has a DVI and HDMI port like that. So just because the card has 5 outputs don't assume you can use em all at once. (I'm pretty sure the one linked above is an exception).

I recommend the VS230 http://www.amazon.com/Epson-VS230-Lumens-Brightness-Projector/dp/B00GMGDFPI/

This one sits in my living room mostly, and even sees the occasional gig. Works flawlessly and colors are super bright. Resolution is decent, but obviously not HD.

I've since made a version for Android phones; you can download it here:

https://play.google.com/store/apps/details?id=com.workSPACE.Fraksl

In case you don't have Android, there's also a WebGL version that you can play in your browser, but it's only hosted on Dropbox and is missing some functionality:

https://dl.dropboxusercontent.com/u/36126087/build/feedback.html