What are /r/hardware's favorite Products & Services?

From 3.5 billion Reddit comments

The most popular Products mentioned in /r/hardware:

The most popular Services mentioned in /r/hardware:

Newegg

AnandTech

Tom's Hardware

EVGA PrecisionX 16

PassMark CPU Benchmarks

Engadget

Best Buy

Greasy Fork

GSMArena

eBay

TechNet

ZDNet

Synology Photo Station

Linux kernel

Super User

The most popular Android Apps mentioned in /r/hardware:

Linux Deploy

Unified Remote

Microsoft Word

GMD Full Screen Immersive Mode

Microsoft Remote Desktop

Android System WebView

Grand Theft Auto: San Andreas

Kore, Official Remote for Kodi

Sentio Desktop (Lollipop, Marshmallow)

Twomon USB - USB Monitor

DroidCamX Wireless Webcam Pro

AppChecker - List APIs of Apps

LCD & AMOLED-Test

Nougat / Oreo Quick Settings

Wifi Analyzer

The most popular VPNs mentioned in /r/hardware:

The most popular reviews in /r/hardware:

One of the VLC developers commented on Hacker News:

> VLC does not (and cannot) modify the OUTPUT volume to destroy the speakers. VLC is a Software using the OFFICIAL platforms APIs.
>
> The issue here is that Dell sound cards output power (that can be approached by a factor of the quadratic of the amplitude) that Dell speakers cannot handle. Simply said, the sound card outputs at max 10W, and the speakers only can take 6W in, and neither their BIOS or drivers block this.
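To put rough numbers on the "quadratic of the amplitude" point, here is a minimal sketch. It assumes output power scales with the square of the signal amplitude and uses the 10W and 6W figures from the quote; the function names are made up for illustration and have nothing to do with VLC's or Dell's code.

```python
import math


def power_ratio(amplitude_gain: float) -> float:
    """Power scales with the square of the amplitude (P is proportional to V^2)."""
    return amplitude_gain ** 2


def max_safe_amplitude(amp_max_watts: float, speaker_max_watts: float) -> float:
    """Fraction of full-scale amplitude that keeps power within the speaker rating."""
    return min(1.0, math.sqrt(speaker_max_watts / amp_max_watts))


# Figures taken from the quoted comment: 10 W output stage, 6 W speakers.
safe = max_safe_amplitude(10.0, 6.0)
print(f"Safe amplitude fraction: {safe:.3f}")
print(f"Power at that amplitude: {10.0 * power_ratio(safe):.1f} W")
```

Under that assumption, the speakers would already be at their limit at roughly three quarters of full-scale amplitude, whatever application is producing the audio.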

And if 2.0 installation fails, there's a way to get it to install on 1.2 and 1.2 has been around since 2011...

https://winaero.com/how-to-install-windows-11-without-tpm-2-0/

Note: Situation may change in the future.

tl;dr Comes with two of the new NF-A12x25 fans for push-pull and has 37% more fin surface area. Noctua claims it can match 140mm coolers in performance and that it has 100% RAM compatibility with all LGA1151 and AM4 motherboards (no overhang), though it will require RAM shorter than 42mm for LGA2066. MSRP of $99.90/€99.00/£89.95 on Amazon/eBay.

$530 for an R5, 8GB RAM, 256GB NVMe, FHD screen.

Basically the baseline for a good laptop these days. With tariffs, it could go up to $700-800.

Yeah, I noticed this as well.

Deal aggregators like Slickdeals are covered with Ryzen processors.

A couple I found notable:

I get why Summit Ridge might see deals, as Pinnacle Ridge is probably ~3 mo away.

However, I'm not sure what's going on with Threadripper. It only just came out about 3 mo ago, so it's not even close to being replaced. And since Threadripper is based on Epyc, I bet the earliest we could see a replacement is in 2019 when 48-64C Rome drops.

As long as general consumers can't tell the difference, the rebranding will keep going.

Recently came across a listing of a retailer selling a gaming desktop with a GTX 1060 3GB, FX-6300, 120GB SSD, and 16GB DDR3 for $800. Hey, they cut the price from $900.

https://www.amazon.com/SkyTech-Oracle-Computer-Desktop-Motherboard/dp/B071R6HW52

> May 4, 2018: In my opinion this is the very best deal, on any site

> January 3, 2018: 10/10, would recommend

> June 10, 2018: No Issues. Works as it should at a fair price.

> March 10, 2019: Great machine

Reminds me of my friend that bought an i3-7350K and a Z270 mobo in 2018 after buying into a sales person's pitch hook, line and sinker. After the i3-8350K had already launched.

EDIT: My parents bought a Pentium 4 laptop sometime after Intel launched the Core series as they had bought into the "MHz myth". That thing was a mobile space heater and a mini jet engine.

No, they never promised to open their drivers. They even explicitly said that their binary blob (fglrx) will probably never be opened up. Instead, they did exactly what the Linux community asked them to do: release hardware documentation of all of their graphics cards. "We can write the drivers ourselves!", said the Linux community. Turns out that writing graphics card drivers is hard, especially considering all the OpenGL hacks which are needed to function properly. So after releasing the hardware documentation, the moaning shifted to complaining about "AMD not developing an open-source driver". And so they did. They hired people to work on the open-source initiative full-time, which paid off. In kernel 4.2 for example, 36.8% of the changes in the kernel were made by AMD alone.

Not only that, but AMD has been a major player in userland, where it rewrote a lot of code (setting the stage, so to speak) in Mesa and other software packages. Saying that 'opening up' only happened lately is wrong, and it irks me. The Linux community is an entitled bunch, which will never stop complaining even when companies do exactly what they asked for.

A RX 580 will do 30MH/s at 150W overclocked and undervolted which is still profitable depending on power cost.

The massive farms buying up all the GPUs are located in countries and regions that pay less than 10c per kWh.

Also, use this calculator: https://www.nicehash.com/profitability-calculator#

It factors in many alt-coins. Plenty of miners aren't mining ETH and instead mining other more profitable coins.
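For a back-of-the-envelope check on the power side, here is a rough sketch using the 30MH/s, 150W and 10c/kWh figures above. The revenue per MH/s per day is a hypothetical placeholder, not real market data; plug in whatever a profitability calculator reports.

```python
def daily_power_cost(watts: float, price_per_kwh: float) -> float:
    """Electricity cost in dollars for running a load 24 hours."""
    return watts / 1000 * 24 * price_per_kwh


def daily_profit(hashrate_mh: float, revenue_per_mh_day: float,
                 watts: float, price_per_kwh: float) -> float:
    """Mining revenue minus electricity cost, per day, in dollars."""
    return hashrate_mh * revenue_per_mh_day - daily_power_cost(watts, price_per_kwh)


cost = daily_power_cost(150, 0.10)   # RX 580 figures from the comment
break_even = cost / 30               # revenue needed per MH/s per day

ASSUMED_REVENUE_PER_MH_DAY = 0.02    # hypothetical placeholder value
profit = daily_profit(30, ASSUMED_REVENUE_PER_MH_DAY, 150, 0.10)

print(f"Power cost per day: ${cost:.2f}")
print(f"Break-even revenue per MH/s per day: ${break_even:.4f}")
print(f"Profit per day at the assumed revenue: ${profit:.2f}")
```

The break-even figure depends only on the card's efficiency and the power price, which is why cheap electricity matters so much.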

Yeah, SSD prices are the one area where we're seeing a significant drop. Just as an example, Amazon is selling 240GB Crucial BX300s for $38 today. According to Anandtech, the MSRP for the drive at launch in August 2017 was $89.99.

A few things to point out.

The most disappointing video card was between the Radeon VII and the 1650. Here's the thing. No one ever gets excited for low end budget cards to begin with, and I don't recall Nvidia hyping up the 1650. The RVII, on the other hand, was announced at one of the biggest shows of the year with AMD giving 3 benchmarks that showed it beating the RTX 2080. Then it comes out and is 8% slower, without all the rtx features, and suffers from all the AMD/Vega problems. At the same price. That's a disappointment beyond what the 1650 could ever put out. The 1650 feels completely shoehorned in.

Now the Rx 5700 got the best award for enthusiasts because of the powerplay mod and flashing for that 10% boost to performance to match 5700XT levels while consuming Rx Fury levels of energy. That's silly considering that most gpus can overclock to ~10% performance uplift using whatever overclocking software you choose. They shouldn't get an award for that, especially with all the Navi driver bugs.

Edit: I just thought of something after seeing a good Black Friday sale. The 1650 is available in plenty of budget-friendly laptops out there, with one being $600 right now. It's the go-to entry-level laptop GPU, and to say it's a disappointment on the same level as the "first 7nm gaming gpu [to be discontinued]," well, that's just a lack of perspective. Having worse price to performance alone shouldn't put it on the list, as AMD often has no choice but to price their video cards lower to compete with Nvidia. For good reason. Expectations and hype, in my opinion, should be the deciding factor.

>The Atomic Pi is available on Amazon US for US$34 and can be shipped worldwide

Ya but it's not

Also this implementation looks janky af, the power supply is on a separate board? No chassis of any kind available?

I'd be really interested in one of these if I could buy one with a basic little chassis and a power supply, but from the Digital Loggers site it seems like the best they have to offer is the unit itself with a separate power supply board so it accepts a 2.5mm power adapter which you appear to have to supply yourself. Why is the 2.5mm jack not on the board itself? Why can't I buy a compatible chassis and power adapter at the same time?

Hello everyone,

The way the EVGA GTX 970 ACX heat sink was designed is based on the GTX 970 wattage plus an additional 40% cooling headroom on top of it. There are 3 heat pipes on the heatsink – 2 x 8mm major heat pipes to distribute the majority of the heat from the GPU to the heatsink, and a 3rd 6mm heatpipe is used as a supplement to the design to reduce another 2-3 degrees Celsius. Also we would like to mention that the cooler passed NVIDIA Greenlight specifications.

Due to the GPU small die size, we intended for the GPU to contact two major heat pipes with direct touch to make the best heat dissipation without any other material in between.

We all know the Maxwell GPU is an extremely power efficient GPU, our SC cooler was overbuilt for it and allowed us to provide cards with boost clocks at over 1300MHz. EVGA also has an “FTW” version for those users who want even higher clocks.

http://www.evga.com/images/forum/precision_gtx970sc.png

Regarding fan noise, we understand that some have expressed concerns over the fan noise on the EVGA GTX 970 cards, this is not a fan noise issue but it is more of an aggressive fan curve set by the default BIOS. The fan curve can be easily adjusted in EVGA PrecisionX or any other overclocking software. Regardless, we have heard the concerns and will provide a BIOS update to reduce the fan noise during idle.

Thanks, EVGA

It would be trivial to check for someone with a test rig. Go buy some for and see. That's 25 leafs for $45. Given the size of the leaf you could cover several cpus with one.

Generally you don't need much thickness at all, in fact the thinner the better. I am just not sure that gold is actually pliable enough to fill in any micro imperfections the way liquid metal would.

Everyone interested in this topic may find the comments here by David Chisnall interesting. He was the chair of the J extension group but left due to how the foundation operates.

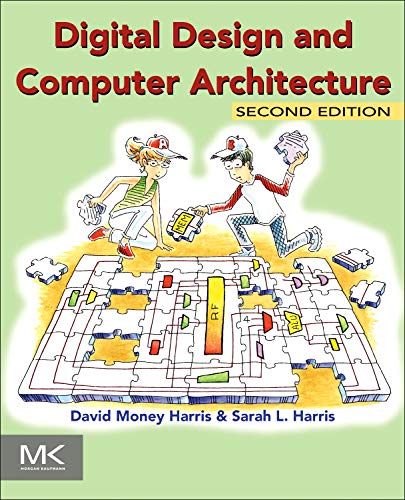

Would probably be better to post this to [r/ECE](/r/ECE) rather than to Hardware, but either way:

Shortly summarized: A MOSFET is a 3-terminal device (4 if counting bulk, and there's also a 6T type), where you have Gate, Drain & Source. Assuming you know BJTs, you can "map" them as:

- BJT - MOSFET

- Base - Gate

- Collector - Drain

- Emitter - Source.

Again comparing MOSFETs to BJTs, we can say that a MOSFET is a VCCS (voltage-controlled current source), while a BJT is a CCCS (current-controlled current source). In other words, when you apply a voltage to the gate of a MOSFET, you create a current at the drain. The current that's generated depends on the operating region:

- Subthreshold (gate-source voltage is less than the threshold voltage; Vgs < Vth)

- Linear/Triode region (gate-source voltage is at or above threshold, but drain-source voltage is less than the overdrive voltage; Vgs >= Vth, Vds < Vgs - Vth)

- Saturation region (gate-source voltage is at or above threshold, and drain-source voltage is at least the overdrive voltage; Vgs >= Vth, Vds >= Vgs - Vth).

Normally one operates in the saturation region.
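As a rough illustration of the regions, here is a minimal sketch of the textbook long-channel square-law model for an n-channel device. It puts the triode/saturation boundary at the usual overdrive voltage, Vds = Vgs - Vth, and ignores channel-length modulation and subthreshold leakage. The parameter `k` (mu_n * Cox * W/L) and the example values are made up for illustration.

```python
def nmos_region(vgs: float, vds: float, vth: float) -> str:
    """Classify the operating region of an n-channel MOSFET (assumes vds >= 0)."""
    if vgs < vth:
        return "subthreshold"
    if vds < vgs - vth:
        return "triode"
    return "saturation"


def nmos_drain_current(vgs: float, vds: float, vth: float, k: float) -> float:
    """Square-law drain current in amps; k = mu_n * Cox * W / L in A/V^2."""
    region = nmos_region(vgs, vds, vth)
    vov = vgs - vth  # overdrive voltage
    if region == "subthreshold":
        return 0.0   # this simple model ignores subthreshold leakage
    if region == "triode":
        return k * (vov * vds - vds ** 2 / 2)
    return 0.5 * k * vov ** 2


# Hypothetical device: Vth = 0.7 V, k = 2 mA/V^2
for vgs, vds in [(0.5, 1.0), (1.7, 0.5), (1.7, 2.0)]:
    current_ma = nmos_drain_current(vgs, vds, 0.7, 2e-3) * 1e3
    print(vgs, vds, nmos_region(vgs, vds, 0.7), f"{current_ma:.3f} mA")
```

In saturation the current depends (to first order) only on Vgs, which is the voltage-controlled current source behaviour described above.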

Not sure how much details you want, but if you want to read more about MOSFETs you've got books such as Sedra & Smith or Razavi

That's the definition of a Rumor.

An unconfirmed statement by an unconfirmed spokesperson, speaking about the unconfirmed actions of a separate organization.

Something to keep in mind when finding a hard drive:

Some companies keep a long inventory, some companies keep a short inventory. Traditionally in the computer market, short inventory companies require higher volume and take advantage of the generally downward prices in the market. Sites such as newegg and amazon work this way.

However, in cases of a break in supply, they don't have much in the warehouse and can't get anything else. They also know that they will sell a certain number of drives even at much higher prices. Knowing that in the next few months they will only get X drives and usually sell 5X drives means they increase prices so they can make the most per drive since they can't sell any more drives anyway.

Long inventory companies such as BestBuy, Staples, etc don't have to do this. They will get a higher price per unit than Newegg usually does, but they have 3-5 months stock of these drives. They are better off if they get more sales from this at their normal price than jacking it up and getting fewer sales.

Thus a WD 2TB drive at newegg costs $150: http://www.newegg.com/Product/Product.aspx?Item=N82E16822136772

The same drive at BestBuy costs $80: http://www.bestbuy.com/site/Western+Digital+-+Caviar+Green+2TB+Internal+Serial+ATA+Hard+Drive/9234465.p?id=1218064150518&skuId=9234465&st=Western%20digitial%202TB%20drive&cp=1&lp=2

You might be able to crack open the external hard drive and put it in a hard drive enclosure, or put it in your PC to see if it works. The ENCLOSURE that your portable drive uses might be broken, but the hard drive might NOT be. If you hear it spinning and trying to power up, then you still have a chance at recovering the data.

http://www.newegg.com/Store/SubCategory.aspx?SubCategory=92&name=External-Enclosures#

There are some (a lot) at Micro Center, too, in case you live near one and need to do this immediately for peace of mind.

Yep. Leaks today indicate Intel is on a hiring spree to move beyond their Core architecture. It's already very long in the tooth; they haven't been able to get any more IPC out of it since Skylake.

Intel needs a revolutionary new architecture in the next few years, else Zen 2 and 3 may even eclipse them by a big margin on 7nm.

I'd go with the Mega Jumbo Rainbow Afro Circus Clown Adult Costume Wig, personally. You need all the surface area you can when it comes to these things, so as to reduce heat most effectively. Plus, the wide color variety is particularly eye-catching, which is an oft-overlooked feature of custom builds.

Yes it's so sad that these have to run macos. I've been keeping an eye on this project to get linux on m1 in the hope that i can use the hardware without the os one day.

- DirectStorage requires 1 TB or greater NVMe SSD to store and run games that uses the "Standard NVM Express Controller" driver and a DirectX 12 Ultimate GPU.

https://www.microsoft.com/en-us/windows/windows-11-specifications

Companies still make employees sign them (maybe because they use the same onboarding documents across sites in multiple states or to try to scare their employees) but they are unenforceable in California: https://www.huffpost.com/entry/understanding-californias-ban-on-non-compete-agreements_b_58af1626e4b0e5fdf6196f04

Such a sensationalist title compared to what the quotes are!

>Dell EMC is no exception, integrating AMD processors into workstations, all-in-one devices and even servers

> while AMD still absolutely has a place in the processor market, the number of different models offered by Intel means that there is little value in producing an AMD-powered variant of every product in its portfolio.

Doesn't make sense to make a million more skus considering development costs and support costs, no shit, especially considering IT departments have that same mentality of a few decades ago where "you don't get fired for buying IBM" except the cost difference now between the market leader and the new entrant is much smaller. Same with mindshare of consumers.

Why would I go with AMD when the midrange is so much better now? 8GB RAM, 256GB SSD, and a quad-core i7 that boosts to 4GHz. Plus touchscreen, comes with a stylus (not high quality), incredible. The battery life still goes in favor of Intel, at least until more laptops come out with Raven Ridge. Shit, you can get quad-core laptops with the 8250U and decent other specs for $500!

Depends honestly, they're probably referring to a VPN server and not a VPN client like the endless NordVPN spam.

In that case yes, a VPN is a great way to lock down a network while maintaining a level of external access, with the major caveat being you need to make sure the server is up to date. It's a lot harder to compromise something when you need to get through an OpenVPN server first.

Arctic released a 420mm variant of their Liquid Freezer lineup. It's also 38mm thick, like the rest of the lineup, making it probably the most effective AIO on the market.

But yeah there are few cases that can fit it.

Edit: Here's a review from anandtech. Yes it is effective.

i432 was nuts but it's also a genuinely interesting architecture, and there are a few good papers on it for people curious about old timey architectural experimentation. Eg. Performance Effects of Architectural Complexity in the Intel 432.

You can also do the same on the low end.

$87 i3-9100F vs $80-$85 Ryzen 1600 that has been silently revised from 14nm to underclocked Ryzen 2600s. I suppose the only downside is that the new "12nm" 1600s use the 2600's stock cooler, which is worse than the original 14nm 1600's cooler, but in return, you get all of the 6C/12T Zen+ benefits. And you can OC the RAM on a ~$70 B450 board (~$60 if you don't need VRM heatsinks) as well to further crank up the IPC.

https://www.reddit.com/r/hardware/comments/dud1my/new_ryzen_5_1600_available_what_is_the_difference/

https://www.amazon.com/AMD-Processor-Wraith-Cooler-YD1600BBAFBOX/dp/B07XTQZJ28/ref=sr_1_3

I could see the arguments against the 1st gen Zen, but a Zen+ that can be OC'ed to match or exceed 2600's specs? I've seen someone argue that the i3 was still worth getting for gaming with the basis of "no one should be upgrading their PC shorter than 4-5 years between the builds/upgrades" and criticized my plan of upgrading from the 14nm Ryzen 1600 to a used/discounted Zen 3 chip when AMD moves onto Zen 4/5.

New 1440p 144Hz+ panels with Samsung's Quantum Dots or LG's Nano IPS. Bonus points if they include FALD. Realistically, I am hoping for a 165Hz refresh to this panel:

https://www.amazon.com/gp/product/B06XSQ5QN8?tag=rtings-tv-cm11b-20&ie=UTF8

from their faq

>Overall real world performance is something like a 300MHz Pentium 2, only with much, much swankier graphics.

The Pentium II came out in 1997, and the Deschutes core was released in 1998, ranging in clock speed from 266MHz to 450MHz.

So you'd have to go back to 1998 to get CPU performance similar to the Pi.

HOWEVER, graphics-wise, it has performance akin to an Xbox 360, which was released 9 years ago (2005).

There's a couple of things you could do. If you had two separate internet connections, you could use load balancing to get a faster connection by combining the two. Connectify Dispatch works great for that.

If you want a faster connection to your local network, you could use LACP teaming to bond the two connections together to make a 2Gbps virtual interface. You will need a switch that supports LACP though, and it's tough to take full advantage of this unless you have multiple client machines download files from your computer. Another benefit of this is if one port happens to fail, it will continue to run off the other port. Really only helpful for servers though that need 100% uptime.

Or you could turn the computer into a simple pfSense router.

Or you could just bridge the connections in Windows, making them act similar to a switch. That gives you the possibility to plug other ethernet devices into it. For example, if you want to use your laptop simultaneously at your desk, you could plug it into the other ethernet port if you don't have a switch nearby.
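On the LACP point above, here is a simplified sketch of why a single client usually can't use both links. Bonded links typically pick the outgoing port by hashing packet headers, so every frame between the same two hosts lands on the same link. The hash below is loosely modelled on a layer-2 (MAC XOR) policy; real switches and drivers use their own variants, and the MAC addresses are made up.

```python
def pick_link(src_mac: str, dst_mac: str, n_links: int) -> int:
    """Choose a bonded link from the last byte of each MAC address (toy hash)."""
    src_last = int(src_mac.split(":")[-1], 16)
    dst_last = int(dst_mac.split(":")[-1], 16)
    return (src_last ^ dst_last) % n_links


server = "aa:bb:cc:00:00:01"   # hypothetical addresses
clients = ["aa:bb:cc:00:00:10", "aa:bb:cc:00:00:11", "aa:bb:cc:00:00:12"]

for client in clients:
    print(client, "-> link", pick_link(server, client, 2))
```

Each client is pinned to one 1Gbps link, so the 2Gbps aggregate only shows up once several clients hash to different links.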

You can probably find it cheaper if you did a bit more digging on Ali.

> one of the huge advantages of water cooling

air coolers have had the same feature for decades though. The solution is a fan shroud. Even my first computer 20 years ago had that. Like so https://www.amazon.com/Thermalright-100700771-Fan-Duct-120/dp/B00O24R774

Basically, the status bar in Windows will burn onto the screen. Windows by default uses relatively bright colors and persistent GUI features. Combine those two and that equals burn-in in a relatively short period of time.

Whenever OLED burn-in comes up, a lot of Redditors mention that their OLED smartphone doesn't have burn-in issues. That's almost always not true, and you can test it yourself using a gray background. If you have a persistent navigation bar, you definitely have that afterimage perma-etched into your display. It's just not noticeable because that burn looks like a nav bar.

For those interested, you can test for yourself whether or not your OLED has burn-in:

https://play.google.com/store/apps/details?id=de.lappe.tim.android.lcdtest&hl=en

EDIT: My six month old Pixel has burn in. Minimum screen brightness.

This is the product. It only has 10 reviews, so not enough reviews to make a conclusion.

I decided to run a different Netgear product through reviewmeta and it looks like it detected some shilling.

Holy shit EVGA will no longer provide ~~them~~ ^(the 2k generation of GPUS), no wonder the evga 2080ti models are going for $1.5k-$2.0k NEW/USED! I'm hoping that the 3080ti AIBs get released in Sept/Nov before the price gougers fuck everything up!

EDIT: Specifically mentioned that it was only the 2k generation of GPUs, and not NVidia GPUs altogether...

Why this stupid misleading title? Steam just removed Chinese cyber cafés from their survey which are almost entirely based on Intel and nvidia.

Edit: oh nvm. I thought it was your title. Guru3d doesn't actually know because they didn't do their research. Doesn't surprise me.

Edit2: Here. I guess some people just don't like to read text surrounding graphs, or they just want clicks from r/amd

Another solution is to build your own external HD.

Assembly is rather simple to do, and you get an external HD that you can be confident is what it should be: free space for storage without any nasty little surprises from Western Digital's suppliers/subcontractors.

linked items chosen for illustrative purposes only.

Verizon's moving away from CDMA, too. They even debuted their first LTE-only flip phone a while back.

Forget about the i5 for $20 more, at $160, you can go for the Ryzen 5 2600 for a mere $6 more. https://www.amazon.com/dp/B07B41WS48/

Unless you don't need a GPU at all or you do need a GPU but you just need GT 1030 level graphics, the Ryzen 5 2600 is the way to go in my opinion.

It is like HT in that one core looks like two, but it can actually do some of two things at once. Better than Intel's version of HT.

>A single Bulldozer core will appear to the OS as two cores, just like a Hyper Threaded Core i7. The difference is that AMD is duplicating more hardware in enabling per-core multithreading. The integer resources are all doubled, including the schedulers and d-caches. It’s only the FP resources that are shared between the threads. The benefit is you get much better multithreaded integer performance, the downside is a larger core.

http://www.anandtech.com/show/2872

As far as core counts go, see http://www.anandtech.com/show/2881/2

>Henceforth AMD is referring to the number of integer cores on a processor when it counts cores. So a quad-core Zambezi is made up of four integer cores, or two Bulldozer modules. An eight-core would be four Bulldozer modules.

Truly random RNG via hardware is a thing in classical computing. Probably the most famous method is by watching lava lamps.

If you're doing this professionally and want to save tons of time, consider renting a server. Even AWS, as expensive as it is, it's pennies compared to your hourly rate. For instance, you can rent c4.8xlarge, which runs on 18 cores, 36 vCPU, 60GB RAM, and it's only $0.4131 per hour (spot pricing).
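A quick sketch of that comparison, using the spot price quoted above. The job length, hours saved and hourly rate are hypothetical placeholders, and spot prices change over time.

```python
SPOT_PRICE_PER_HOUR = 0.4131  # c4.8xlarge spot price quoted in the comment


def rental_cost(hours: float, price_per_hour: float = SPOT_PRICE_PER_HOUR) -> float:
    """Cost in dollars of renting the instance for the given number of hours."""
    return hours * price_per_hour


def value_of_time_saved(hours_saved: float, hourly_rate: float) -> float:
    """What the saved working hours are worth at your billing rate."""
    return hours_saved * hourly_rate


# Hypothetical job: 8 rented hours that save 5 hours of waiting at $50/hour.
cost = rental_cost(8)
saved = value_of_time_saved(5, 50.0)
print(f"Rental: ${cost:.2f}, time saved is worth: ${saved:.2f}")
```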

Considering you can pick up a complete Tegra X1 system for $59 right now, I'd be kinda shocked if Nintendo is paying more than $20 for those chips at this point. Presenting the cost difference between a semi-custom AMD part and a six-year-old off-the-shelf Nvidia one as that trivial feels strange.

Do you know anyone that actually uses an external RNG?

I've always been interested in the idea of using something like this, https://www.amazon.com/TrueRNGpro-Hardware-Random-Number-Generator/dp/B01JTJ6D0S

FOR SCIENCE!

I decided to test this empirically.

Keyboards

Macbook keyboard - standard rubber dome + scissor switch mechanism

Razer Black Widow - cherry mx blue "clicky tactile"

PFU Happy Hacking Keyboard Pro 2 - topre capacitive

Method

I opened up typeracer, and a google docs spreadsheet to record my data. In one trial, I did one race on my laptop keyboard, one race on my HHKB and one race on my black widow. I did 15 trials.

Results

Laptop keyboard:

Average: 109.27 WPM Standard Deviation: 12.01

HHKB:

Average: 107.8 WPM Standard Deviation: 11.37

Black Widow:

Average: 105.4 WPM Standard Deviation: 11.88

Conclusion

For me, there was no statistically significant difference in speed between a mechanical and a laptop keyboard.
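For anyone who wants to check the "no statistically significant difference" conclusion from the summary numbers alone, here is a minimal sketch of Welch's t-test using the means and standard deviations reported above, with 15 trials per keyboard. It treats the three sets of races as independent samples, which is an assumption: the raw per-trial data isn't posted, so a paired test isn't possible here. The critical value is the approximate two-sided 5% threshold for about 28 degrees of freedom.

```python
import math


def welch_t(mean1, sd1, n1, mean2, sd2, n2):
    """Return (t statistic, Welch-Satterthwaite degrees of freedom)."""
    v1 = sd1 ** 2 / n1
    v2 = sd2 ** 2 / n2
    t = (mean1 - mean2) / math.sqrt(v1 + v2)
    df = (v1 + v2) ** 2 / (v1 ** 2 / (n1 - 1) + v2 ** 2 / (n2 - 1))
    return t, df


N = 15  # trials per keyboard
laptop = (109.27, 12.01, N)
hhkb = (107.8, 11.37, N)
black_widow = (105.4, 11.88, N)

T_CRIT = 2.05  # approximate two-sided 5% critical value for ~28 df

# Largest gap in the data: laptop vs Black Widow
t, df = welch_t(*laptop, *black_widow)
print(f"t = {t:.2f}, df = {df:.1f}, significant: {abs(t) > T_CRIT}")
```

Even for the largest gap between keyboards, the t statistic comes out below 1, well short of the threshold.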

I'm going to break away for a bit here: from an EE perspective, this new mac pro is fucking cool. The heatsink design, the way they route the power (mini bus bars!), the fucking nuts high-speed gpu connectors and insanely dense flex cables, and all that computing power into something so small. I mean, it's proprietary and expensive, but it's cool.

You can get away with shit APs (just run a local VPN if you think the encryption is worthless), but you do need a secure gateway. You can do it on the cheap too, just get a used thin client and put OpenWrt on it. Also as a bonus you can do advanced traffic shaping, which is still not even offered by commercial options.

There was a fair bit of SSD news at CES. Here are just a couple examples.

OCZ's Vertex Pro 3 Demo: World's First SandForce SF-2000

Micron's RealSSD C400 uses 25nm NAND at $1.61/GB, Offers 415MB/s Reads

Late February-March is still expected. Unfortunately, according to Anand, it appears that SandForce will be missing out initially.

>It’s looking like SandForce will be last to bring out their next-generation drive in the first half of the year with both Micron and Intel beating it to the punch, but if we can get this sort of performance, and have it be reliable, it may be worth the wait.

AMD is in a very good position when it comes to low-powered CPUs. Bobcat is by far the best architecture available at the moment and is actually good enough to run some newish games at minimal settings.

It's due for a die shrink in early 2012. These 2nd gen Enhanced Bobcats will have 1-4 cores and be paired with a Radeon HD 7000 series GPU. They'll consume up to 9W for the 1-2 core Krishna, and up to 20W for the 2-4 core Wichita. Intel's Atom simply can't compete with that and ARM can't even compete with Atom.

Barring any major problems with the 28 nm process (which would also affect ARM), AMD's Deccan platform (Krishna and Wichita) is going to be too good to ignore.

What I'm seeing from this platform is a mini-ITX gaming system more powerful than a PS3. Also, if I was going to get a Windows 8 tablet, it would most definitely need to be powered by Krishna.

Edit: The mini-ITX gaming system, is actually a project I've got lined up for next year. It involves a custom linux build and will basically be an open source console that's also a fully functional PC, so I'm slightly biased.

Easy Anti-Cheat also has a Linux port. The issue is Windows anti-cheat and Proton/Wine. You need a native Linux version. Even Valve's VAC won't work in Proton: https://github.com/ValveSoftware/Proton/issues/3225

I have Optane 900p 480GB which runs rings around any SSD currently on the market. They're telling me that my Optane is going to have trouble because it isn't 1TB?

What's this 1TB rubbish? Why would DirectStorage care so much about how much space you have on your drive? All it's doing is using the GPU to access data already occupying space on the hard disk.

The actual prerequisite documentation doesn't state anything about any 1TB requirement:

https://www.microsoft.com/en-us/windows/windows-11-specifications

All it says is "DirectStorage requires an NVMe SSD to store and run games that use the "Standard NVM Express Controller" driver and a DirectX12 GPU with Shader Model 6.0 support." and that's it.

I appreciate that, but I should be good. If the stock cooler is not up to par (it should be), I'll just pair THIS with one of my many spare Noctua NF-A12x25s.

This isn't really accurate.

Companies must innovate or risk other companies eating their lunch. HP were the key people who figured this out in the electronics industry back when they were a behemoth before the modern computer age and their current MBA leadership.

>We encourage flexibility and innovation.

> We create an inclusive work environment which supports the diversity of our people and stimulates innovation. We strive for overall objectives which are clearly stated and agreed upon, and allow people flexibility in working toward goals in ways that they help determine are best for the organization. HP people should personally accept responsibility and be encouraged to upgrade their skills and capabilities through ongoing training and development. This is especially important in a technical business where the rate of progress is rapid and where people are expected to adapt to change.

Bear in mind this is a way to act to achieve the goals they as CEOs had set, not a list of goals.

They had this as a key tenet of their business outlook, along with keeping margins reasonable to discourage competition and most CEOs are aware of 'The HP Way'. The book "The HP Way" was, and maybe still is, essentially required reading for people interested in running a business.

Good companies understand this. Those that don't risk losing market share because it gives competitors the opportunity to form and catch up.

There have been some articles indicating absolute worst-case, an unpowered ssd could lose data in just a few days if it's stored somewhere with fluctuating temps.

http://www.zdnet.com/article/solid-state-disks-lose-data-if-left-without-power-for-just-a-few-days/#!

Anand's take on this: http://anandtech.com/show/9248/the-truth-about-ssd-data-retention

edit: added worst-case verbiage and Anand link.

edit2: make sure to read the reply from /u/arthurfm.

Anandtech has a significantly better review up of the Transformer prime and the Tegra 3 architecture.

Tons of benchmarks and data and prescient analysis.

A 2TB drive for under $250 is a pretty damn good start.

Of course we won't see 100% adoption, but I feel like most gamers can relegate their HDDs to storage drive duty and get a big ol' SSD as a boot drive.

Me, I've been 100% SSD since around 2014. My build was a hair over $1000 and I eventually poached an old SSD from my college laptop to be used as a data drive in my gaming desktop. It really hasn't been that hard.

The WNDR3700 is pretty flexible and feature-packed. Two antennas (one 2.4GHz, one 5GHz), and a ton of features. Plus it runs DD-WRT. Plus the DD-WRT wiki says it has one of the most powerful antennas they've tested.

Samsung marketeers did a 24 SSD RAID 0 benchmark:

text: http://www.tomshardware.com/news/samsung-ssd-hdd-raid,7224.html

video: http://www.youtube.com/watch?v=96dWOEa4Djs

They maxed out at 2GB/s, PCI-E limit.

From HDTune, the ramdrive maxed out at 20 GB/s. So maybe a factor of 10x in terms of speed?

Jesus, blogspam. Three links later, we get to Techcrunch. Even better, here's the video.

1) Did you actually just link Softpedia as a credible news source!?

2) Seagate's manufacturing plants were not damaged by the flooding in Thailand

Just saying

Pat's been known as a man of faith for a long time, he even wrote a book about managing your career along with having time for your family and God: https://www.amazon.com/Balancing-Your-Family-Faith-Work/dp/0781438993

Nope, they cost like $40 (for m.2 NVME, 2.5" SATA is more like $30). $50 is nearing 500GB territory.

Edit: link for those interested https://smile.amazon.com/Inland-Premium-M-2-2280-Internal/dp/B07P6STQ54

Kinda disingenuous. If I bought this laptop the day it became available (Nov. 2015), I'd have gotten about 2.5 years support. Fermi had a ten year lifespan, but many devices using Fermi GPUs came out after day 1.

If you are into serious keyboards, I highly recommend going with mechanical switches. These are much more durable and have that oh so nice 'click' sound to a keypress.

Don't go with any Razer mech keyboards. While the switches are mechanical, the rest of the keyboard is quite flimsy and they break easily. That's what you get with rushed production.

Having said that, the type of gaming you are doing will definitely influence the type of switches you should get.

My own rule of thumb: RTS, Brown switches; FPS, Black switches.

Here's a great article if you want to know more about it: http://www.tomshardware.com/reviews/mechanical-switch-keyboard,2955.html

I currently own a Filco Brown. It's the most satisfying purchase I have ever made in electronics.

Good luck.

that's going to get tougher and tougher. pascal (especially at 1080/1070) is mature enough.

This already exists:

https://aws.amazon.com/ec2/amd/

If demand for this service goes up, Amazon will simply buy more AMD servers. Over time they will reduce the number of Intel servers. Simple supply and demand.

> There's also latency vs bandwidth considerations.

So overlooked! Decoding complexity/power is neither inherently good nor bad. Rather, it is an engineering tradeoff -- compact CISC single-byte instructions use less memory/cache bandwidth and can be packed densely into caches. The RISC/CISC war was ended by the Pentium Pro (aka P6) -- a microcoded RISC micro-architecture implementing a CISC architecture. All of Intel's mainstream desktop/laptop CPUs since then have been P6 derivatives (Atom is a separate story). AMD's Zen micro-architecture (and its derivatives) is a ground-up design based on the same model as P6: RISC micro-architecture implementing a CISC architecture. So pretty much all RISC/CISC debate since ~1996 has been moot.

The real question is what are the tradeoffs? If I make my caches bigger and reduce my core floorplan, what micro-architectural optimizations am I giving up? Obviously 90% cache / 10% core is wrong. But then 90% core and 10% cache is probably wrong, too. So you can't reason in absolutes. You have to run the numbers on real code, using a credible model of the design and see what the various partitions do to real-world performance. There is no shortcut. And there's a whole textbook written on that.
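To make "run the numbers" a bit more concrete, here is a toy sketch using the textbook average memory access time formula (hit time plus miss rate times miss penalty). It is far cruder than the modelling the comment calls for, and every number in it is a made-up assumption, not data from any real chip.

```python
def amat(hit_time_ns: float, miss_rate: float, miss_penalty_ns: float) -> float:
    """Average memory access time: hit time plus the expected cost of a miss."""
    return hit_time_ns + miss_rate * miss_penalty_ns


# Hypothetical design points. A bigger cache lowers the miss rate but
# usually has a slower hit. None of these figures come from real hardware.
DESIGNS = {
    "small cache": {"hit_time_ns": 1.0, "miss_rate": 0.10, "miss_penalty_ns": 80.0},
    "large cache": {"hit_time_ns": 1.5, "miss_rate": 0.04, "miss_penalty_ns": 80.0},
}


def compare(designs: dict) -> dict:
    """Return the AMAT in nanoseconds for each named design point."""
    return {name: amat(**params) for name, params in designs.items()}


if __name__ == "__main__":
    for name, latency in compare(DESIGNS).items():
        print(f"{name}: {latency:.2f} ns")
```

This only captures the memory side. It says nothing about what the core gives up when its floorplan shrinks, which is exactly why a credible model and real code are still needed.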

Looks like a rebrand of a currently available barebones

Considering that it's Microsoft, Amazon, and Google that are moving on this issue... I think it's a cloud security bug.

Cloud is different: servers run code from the customers, and rely on chip-level security to make sure that Customer#1 can't see the data of Customer #2. Imagine if you will, that you could see all the memory of all of the other customers by simply buying an AWS node and scanning memory around.

So consumers probably won't have to deal with this bug. On the other hand, Cloud Compute is where the money is right now. So maybe you'll care if you bought AMD or Intel stocks...

50% by what metric? CPU <> DRAM latency has barely improved over the last two decades.

(Yes, I know the i7 is faster in most applications, but not $800 faster, not even close.)

Here you go... KVM over ethernet

> But how do you even run it? Last time I had to use a crack to get it to even run without insta-crashing and then suffered from low fps.

If you are on 64-bit Windows, download the 64-bit binaries here.

Extract Bin64 to the game directory alongside Bin32

Delete Bin32 and rename Bin64 to Bin32

Now the Steam version should run without issue on a 64-bit version of Windows.
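If you'd rather script the folder swap than do it by hand, something like this should work. It assumes Bin64 has already been extracted into the game directory; the function name and the example path are hypothetical, so point it at your own install.

```python
import shutil
from pathlib import Path


def swap_in_64bit_binaries(game_dir: Path) -> None:
    """Replace the Bin32 folder with the already-extracted Bin64 folder."""
    bin32 = game_dir / "Bin32"
    bin64 = game_dir / "Bin64"
    if not bin64.is_dir():
        raise FileNotFoundError(f"Extract Bin64 into {game_dir} first")
    if bin32.exists():
        shutil.rmtree(bin32)  # delete the 32-bit folder
    bin64.rename(bin32)       # rename Bin64 to Bin32


if __name__ == "__main__":
    # Hypothetical path, adjust to wherever Steam installed the game.
    swap_in_64bit_binaries(Path(r"C:\Games\ExampleGame"))
```

It deletes Bin32 outright, so keep a copy if you want to be able to go back.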

The PC Gaming Wiki page for the game has details on more tweaks/fixes.

It should be noted that this is basically the same thing but already out:

http://www.dx.com/p/meegopad-t01-quad-core-android-4-4-windows-8-hdmi-google-tv-player-w-2gb-ram-32gb-rom-eu-plug-361931

A more readable presentation with all the info and pictures, by a journalist with an EE degree:

>I mean, when it's in black and white directly from the company, how much are you to second-guess them or give benefit of the doubt. At face value 4A were talking about how it applied to their game.

Just because a company says something, doesn't mean it's absolutely 100% correct. They're people, they can make mistakes too.

Apply a bit of common sense before immediately trusting everything you read. Like, that's the most basic thing you can do when reading any articles on anything. I'm not asking you to immediately assume everything is wrong, I'm asking you to apply common sense.

An i3 4330 scores about 20% better than a 965 while using less than half the power. Single-thread improvement is more like 70%. It's not a huge upgrade, but it's definitely an upgrade. If you let your PC run 24/7, the power savings will probably pay for the i3 by the time you replace it.
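A rough way to sanity-check the payback claim. The CPU price, the average wattage gap, and the electricity rate below are all assumptions for illustration, not figures from the comment. A machine that idles most of the day will save far fewer watts than the gap at full load.

```python
def payback_years(cpu_price: float, watts_saved: float,
                  price_per_kwh: float, hours_per_day: float = 24.0) -> float:
    """Years of running before the power savings equal the CPU price."""
    kwh_per_year = watts_saved / 1000 * hours_per_day * 365
    savings_per_year = kwh_per_year * price_per_kwh
    return cpu_price / savings_per_year


if __name__ == "__main__":
    # Assumed inputs: $140 CPU, 60 W average saving, $0.12 per kWh, on 24/7.
    years = payback_years(cpu_price=140, watts_saved=60, price_per_kwh=0.12)
    print(f"Payback in about {years:.1f} years")
```

Plug in your own rate and a realistic average draw; the answer swings a lot with both.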

I've always wondered how such a machine would perform at gaming. Out of curiosity, why wouldn't it work?

Just for the sake of mentioning it: Current Benchmarks of Multiple CPU Systems

>If you are going to complain, do it about the cost of the consumable unit, not the amount of ink you don't get to use.

No reason users can't complain about both gouging AND deceptive business practices. HP got sued for this exact same practice, though the punishment was so small they probably considered their loss a victory.

The amount left in there is not for performance reasons, and it's not an accident they report ~20% remaining as 0% remaining. It's a simple money grab: they now have users purchasing ink about 25% more often, since only 80% of each cartridge gets used.

They carefully picked an amount to leave that is somewhere between "too little to generate much profit" and "so much that nobody would believe it's accidental, or for benign reasons". That's why there's 20ish percent left and not 4% or 50%.
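For the arithmetic on purchase frequency: if a fraction of every cartridge is never used, you need 1 / (1 - fraction) cartridges to print what one full cartridge would. A quick sketch, taking the ~20% figure above as the assumed input:

```python
def extra_purchase_rate(unused_fraction: float) -> float:
    """Fractional increase in cartridges bought for the same amount of printing."""
    if not 0 <= unused_fraction < 1:
        raise ValueError("unused_fraction must be in [0, 1)")
    return 1 / (1 - unused_fraction) - 1


if __name__ == "__main__":
    # ~20% left in the cartridge when it reports empty (the comment's estimate).
    print(f"{extra_purchase_rate(0.20):.0%} more cartridges")
```

So leaving 20% behind means about 25% more purchases, not 20%.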

Scouring Newegg tells me you can get a dual G34 (Opteron) board for around $500 (http://www.newegg.com/Product/Product.aspx?Item=N82E16813151221), plus two bottom-of-the-barrel Magny-Cours CPUs (http://www.newegg.com/Product/Product.aspx?Item=N82E16819105266) for $275 apiece. You'll still be on the hook for a case and memory, but you should be able to manage that with the $500 you've got left in your budget.

Improving the processor will cost you dearly, though. Next step up is nearly double the price.

pcpartpicker has lots of European regions if you look at the drop down menu in the top right of the page.

Alternatively you can try geizhals.eu and pricespy.co.uk (they have other regions if you check at the bottom).

You guys are talking about the price of the LEDs if you were to buy them separately from Amazon or something...

RGB versions of hardware are more expensive than non-RGB hardware. You don't pay just the wholesale cost of the LEDs that are used, that's not how product pricing works...

https://www.amazon.com/Corsair-Vengeance-PC4-28800-Desktop-Memory/dp/B07RM39V5F/

https://www.amazon.com/Corsair-Vengeance-PC4-28800-Optimized-Memory/dp/B07TC4TPCN/

Same speed, same capacity (16GB 3600/C18), $30 more because of the RGB. $30 is 33% of the price...

This with the 3950X must be the best value on the workstation market, especially the compact WS market. Have you seen the four RAM slots on an ITX? 128GB RAM for 500 USD ( https://www.amazon.com/dp/B07N124XDS ) is pretty insane.

Pity they didn't put PCIe lanes in that OCuLink .

I've bought from https://www.overclockers.co.uk/ without issue and they sent candy with my purchase. Amazon does have it https://www.amazon.co.uk/dp/B01C0XZ9IW/ but you are gonna pay out the nose as this is a fairly old cooler now and seems to have limited stock.

They named it this way so they can mislead consumers. For example, this is a USB 3.1 product, but gen 1 or gen 2? And I've seen certain products charge more for 3.1 gen 1, which is identical to 3.0.

3.1 gen 1 = 3.0.
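Spelled out as a lookup table, using the signalling rates from the USB specs (5 Gbit/s for 3.0 and 3.1 Gen 1, 10 Gbit/s for 3.1 Gen 2). The helper name is just for illustration.

```python
# Marketing name -> maximum signalling rate in Gbit/s.
USB_SPEED_GBPS = {
    "USB 3.0": 5,
    "USB 3.1 Gen 1": 5,   # same speed as USB 3.0, only renamed
    "USB 3.1 Gen 2": 10,
}


def same_speed(name_a: str, name_b: str) -> bool:
    """True if two USB marketing names refer to the same signalling rate."""
    return USB_SPEED_GBPS[name_a] == USB_SPEED_GBPS[name_b]


if __name__ == "__main__":
    print(same_speed("USB 3.0", "USB 3.1 Gen 1"))
    print(same_speed("USB 3.1 Gen 1", "USB 3.1 Gen 2"))
```

If a listing only says "USB 3.1" with no generation, assume nothing until you find the actual speed.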

There are already USB adaptors for N64 controllers: https://www.amazon.co.uk/Sega-Saturn-N64-Controller-Adapter/dp/B006ZBHXEO/ref=sr_1_3?ie=UTF8&qid=1514775901&sr=8-3&keywords=n64+usb+adaptor

I got a Buffalo G300NH for $70 a few months ago, to replace a Linksys WRT54GS that finally bit the dust. It comes stock with DD-WRT installed. So far it's reliable, and the wifi coverage is better than my old Linksys.

Yes, but it will be minor. Your processor/gpu are going to eat up the majority of power, and the wifi/3g/screen/bluetooth will finish the job.

I think you'll see greater gains in battery life by simply not wasting as much time. You may get 4 hours with both drives, but the SSD will boot in 20 seconds and the other in two minutes. You've got to account for these things when you consider the full scope of what you're asking.

I think it's harder to find counterexamples: basically every router that has been around for a few years has had a few hardware revisions. The fairly popular TP-Link Archer C7 had 5 revisions large enough to require different firmware images: different WiFi chipsets, CPU frequencies, ROM sizes, USB port counts, etc.

> can you play switch games on this?

Technically yes actually thanks to Yuzu. It also works quite well on existing handhelds like the GPD Win 3 (and has seen major performance improvements since this video as well)

>Cloud service providers won’t drop their prices because of cheaper CPU cores. The savings on the CPU cores in the grand scheme of things is minimal.

But you can get AMD cores 10% cheaper on AWS?

> FP16

Is provided at a larger scale by the P100 and V100. P4000 just needs enough FP16 performance to test an algorithm before dumping it onto a machine-learning focused server.

It's a 'nice to have' but it's really not the focus of the workstation grade GPUs. Because you can just fire up an EC2 P2 Instance at pretty much anytime if you need to run your machine learning algorithm.

>Gaming Drivers

Doesn't need them, it can play games on Quadro drivers just fine and it doesn't experience more than a 1%-2% penalty compared to the GTX 1070.

>AMD is positioning this as a Titan competitor

They absolutely are, which is why it really needs performance on both sides of the fence. It needs to work well enough in professional applications to not slow you down, and it needs to game near enough to the top of the stack to help justify its enormous price tag.

VEGA FE right now only has one of those bases covered.

I'm very far from an Apple fanboy, and I actively avoid Apple products when possible, but a list of hardware is not that useful either. Whether or not you prefer a product, the best thing we have is benchmarks:

The iPad 2 consistently performs much better than the Xoom in every test. While on paper the iPad 2 may be "worse" once you run it, the iPad is a very well engineered machine.

Crazy floods in Thailand are making hard drives a scarce resource. As someone who works in the industry, I can say there are many companies scrambling to get their hands on whatever they can, which isn't much.

Edit: Here is an article

>the industry is starting to see ramifications from the major flooding in Thailand, resulting from heavy monsoon rains months ago. An estimated 45% of the world’s hard-disk drives are made there, and firms like Seagate Technology Inc. and Western Digital Inc. have had their manufacturing plants disrupted.

Tom's Hardware has a series of articles called Best Gaming CPUs For The Money; the last page has a Gaming CPU Hierarchy Chart. Might be useful to you.

I'll bite. Both Nvidia and Intel have a history of anticompetitive behavior, using their larger marketshare and higher revenue to force AMD out of a market. Intel beat the original Athlon (which was faster, cheaper, cooler, and used less power than the P4) by selling its own chips at less than cost to OEMs who agreed to buy exclusively from Intel--they got fined $1.1 billion for it. They also got busted for their C compiler; when you built for AMD targets, it removed all optimizations so the performance was distinctly worse.

Nvidia gets a lot of their performance from drivers that optimize games that weren't written perfectly, which AMD just can't afford to do to the same extent. Not only that, they've published SDKs that deliberately performed badly on AMD cards, and all of their fancy technology is proprietary. They also gimped the normal GPUs' compute performance via drivers so enthusiasts who want to use their GPUs for things other than games have to buy the more expensive models.

Really, AMD is just the least evil of the three. They write open source tech; Mantle became the basis for Vulkan (OpenGL's successor), and instead of writing their own GPU compute library to match CUDA, they implemented an open standard (OpenCL). Nvidia and Intel might have better performance, but AMD is all around a better company for the consumer.

http://www.osnews.com/story/22683/Intel_Forced_to_Remove_quot_Cripple_AMD_quot_Function_from_Compiler_

http://arstechnica.com/series/the-rise-and-fall-of-amd/